Mistral: Voxtral TTS, Forge, Leanstral, & what's next for Mistral 4 — w/ Pavan Kumar Reddy & Guillaume Lample

Mistral is one of the world's leading frontier model labs and has just launched Voxtral TTS, the latest step in its strategy to offer open frontier intelligence for every modality.

This post originally appeared in Latent Space.

“We’re still very far from saturating pre-training.”

Mistral has been on an absolute tear. With so many successful model launches, it is easy to forget that they raised the largest European AI round in history last year. We were long overdue for a Mistral episode, and we were very fortunate to work with Sophia and Howard to catch up with Pavan (Voxtral lead) and Guillaume (Chief Scientist, Co-founder) on the occasion of this week’s Voxtral TTS launch:

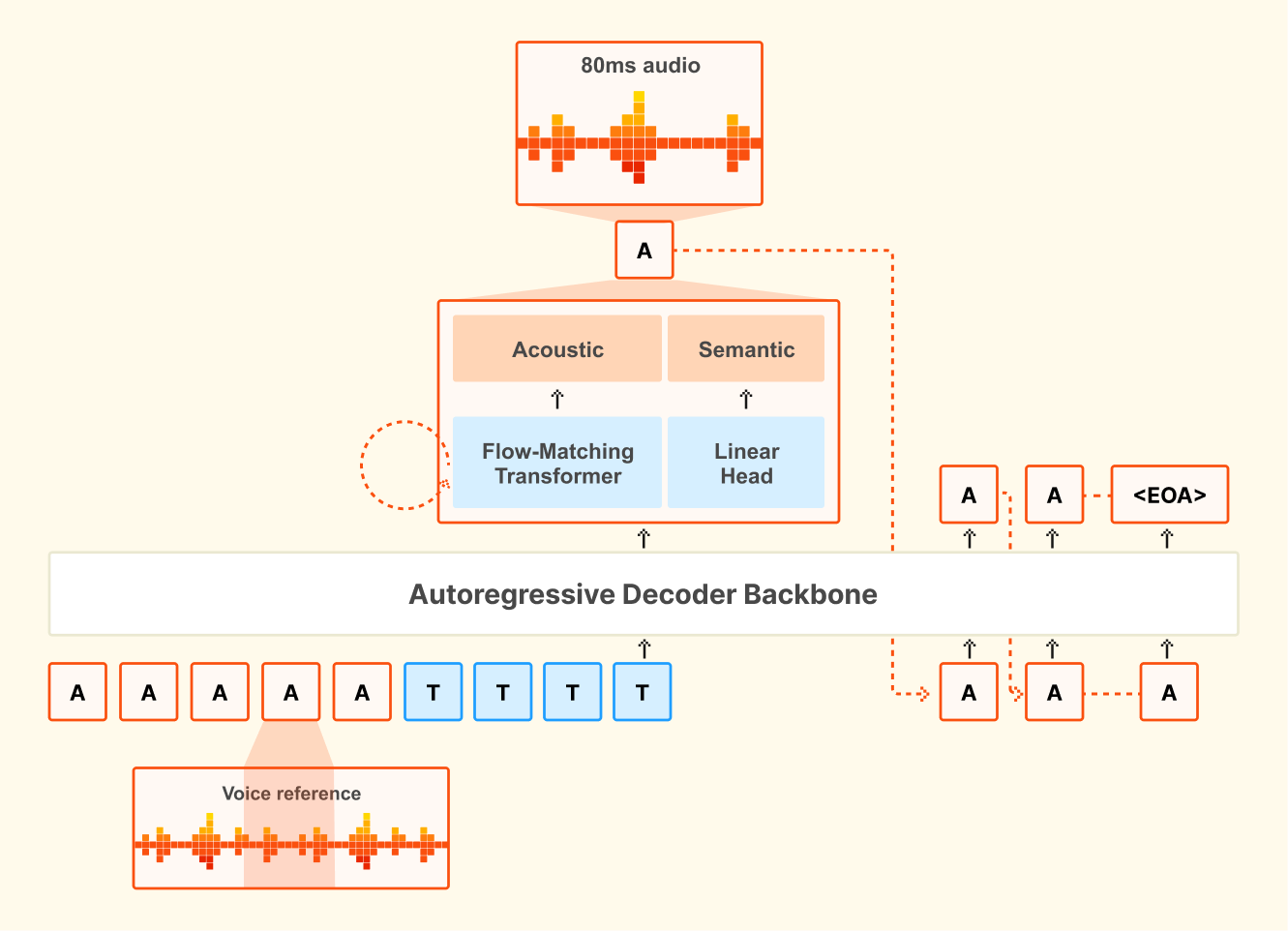

Mistral can’t say it directly, but the benchmarks imply that this is basically an open-weights, ElevenLabs-level TTS model. (Technically, it is a 4B, Ministral-based, multilingual, low-latency, open-weights TTS model with a 68.4% win rate against ElevenLabs Flash v2.5.) The contributions are not just in the open weights but also in open research: we spend a decent amount of the pod talking about the architecture, which combines autoregressive generation of semantic speech tokens with flow matching for acoustic tokens (a technique typically applied only in image generation, as seen in the Flow Matching NeurIPS workshop from the principal authors that we reference in the pod).
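For readers who have not met this pairing before, here is a toy sketch of the general idea, not Voxtral's actual implementation. An autoregressive step picks a discrete "semantic" token per frame, and a flow-matching sampler then turns Gaussian noise into a continuous "acoustic" vector by integrating a velocity field with Euler steps. Everything named here (`next_semantic_token`, `toy_velocity`, `sample_flow`, `generate`, the 16-token vocabulary, the 2-dimensional acoustic vector) is a hypothetical stand-in; in a real system both the token predictor and the velocity field would be learned networks.

```python
import random

def next_semantic_token(history):
    # Hypothetical stand-in for an autoregressive model: the next token
    # depends only on the tokens generated so far.
    return (sum(history) * 31 + 7) % 16

def toy_velocity(x, t, target):
    # Closed-form velocity of a straight-line path that ends at `target`.
    # A real flow-matching model would predict this with a neural network.
    return [(g - xi) / (1.0 - t) for xi, g in zip(x, target)]

def sample_flow(target, steps=8, seed=0):
    # Start from Gaussian noise and integrate dx/dt = v(x, t) from t=0 to t=1.
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in target]
    dt = 1.0 / steps
    for k in range(steps):
        t = k * dt
        v = toy_velocity(x, t, target)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x

def generate(num_frames, steps=8):
    # Per frame: one autoregressive token, then one flow-matching sample
    # conditioned on that token (here the token just sets the toy target).
    history, frames = [], []
    for i in range(num_frames):
        token = next_semantic_token(history)
        history.append(token)
        target = [token / 16.0, -token / 16.0]
        frames.append((token, sample_flow(target, steps=steps, seed=i)))
    return frames

if __name__ == "__main__":
    for token, acoustic in generate(3):
        print(token, [round(a, 3) for a in acoustic])
```

The point of the split is that the discrete tokens carry what is being said, one step at a time, while the continuous sampler fills in how it sounds in a handful of integration steps rather than one token at a time.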

You can catch up on the paper here, and the full episode is live on YouTube!
