Latest open artifacts (#19): Qwen 3.5, GLM 5, MiniMax 2.5 — Chinese labs' latest push of the frontier

Welcome to the year of the horse!

This post originally appeared in Interconnects.

It’s been a busy month at the top end of open-weights AI, with new flagship models from Qwen, MiniMax, Z.ai, Ant Ling, and StepFun. Still, all eyes are on the pending release of DeepSeek V4, which rumors increasingly suggest is imminent. Beyond the large frontier models, this issue is a bit lighter on the long tail of niche modalities and model sizes.

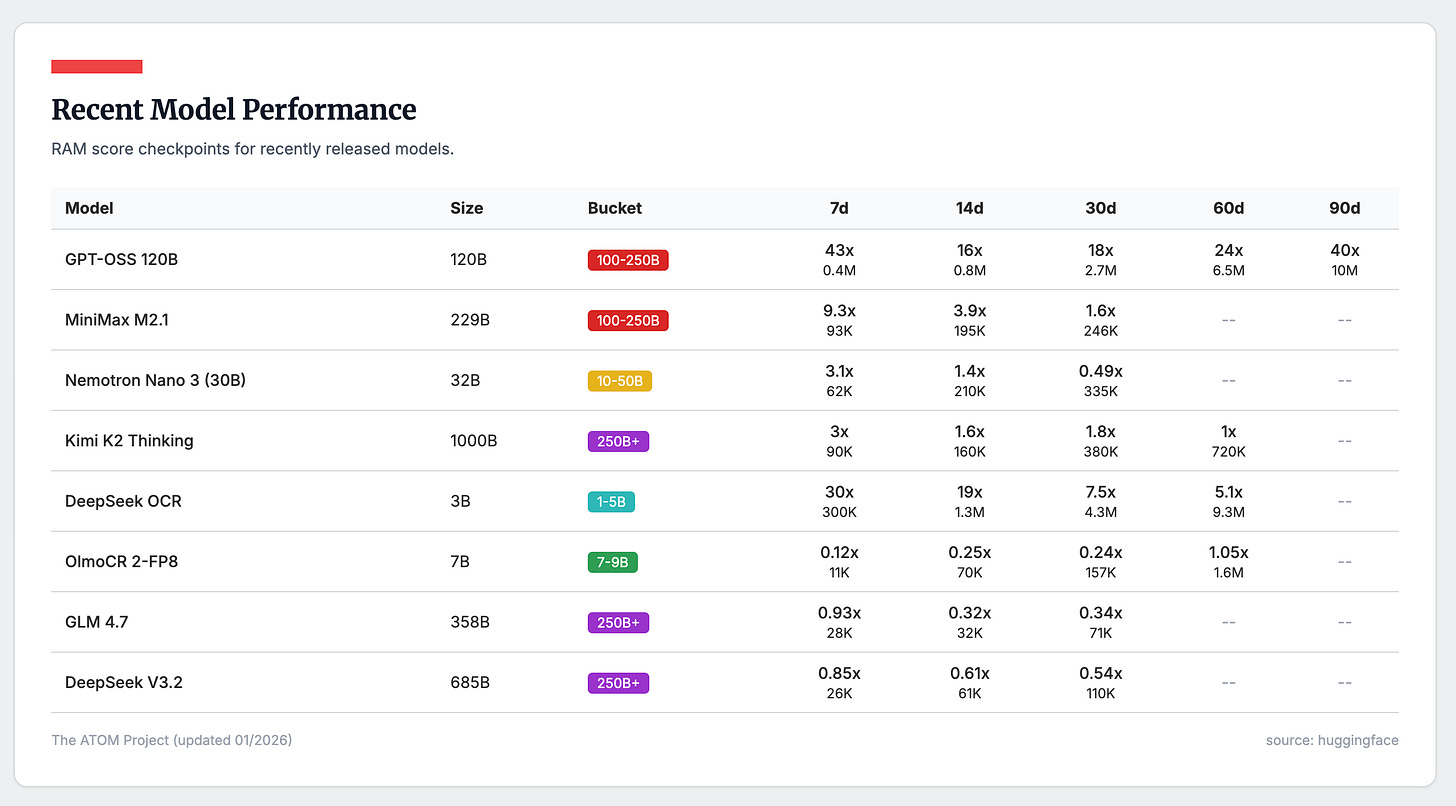

To track all these releases, we’re using our new Relative Adoption Metrics (RAM), a measurement tool that normalizes a model’s downloads relative to peer models in its size class. It has already proven extremely useful, highlighting underrated models like GPT-OSS, which is literally off the charts in downloads: it is the most popular American open-weights model since Llama 3.1. A RAM score above 1 means a model is on track to become one of the top 10 most-downloaded models of all time in its size class. We’re particularly interested to see how early adoption of the smaller Qwen 3.5 dense models compares with Qwen 3, as Qwen’s ever-growing brand is weighed against a trickier hybrid architecture that can push the limits of some open-source tools.
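The exact RAM formula isn’t spelled out here, so the following is only a minimal sketch of one plausible reading, under loudly stated assumptions: a model’s cumulative downloads at its current age (days since release) are divided by the downloads that the 10th-ranked all-time peer in the same size class had at that same age. Under this reading, a score above 1 means the model is ahead of the pace of the 10th-best model in its class. The `DownloadHistory` type, the size-class labels, and the `relative_adoption` function are hypothetical illustrations, not the actual RAM implementation.

```python
from dataclasses import dataclass


@dataclass
class DownloadHistory:
    """Hypothetical record: cumulative downloads of one model by days since release."""
    name: str
    size_class: str        # size-class bucket label; the bucketing scheme is an assumption
    cumulative: list[int]  # cumulative[d] = total downloads after d days


def downloads_at(h: DownloadHistory, day: int) -> int:
    # If a history is shorter than `day`, fall back to its last recorded value.
    return h.cumulative[min(day, len(h.cumulative) - 1)]


def relative_adoption(model: DownloadHistory, peers: list[DownloadHistory], top_k: int = 10) -> float:
    """Hypothetical RAM-style score: the model's downloads at its current age divided
    by the downloads of the top_k-th all-time peer (same size class) at that age."""
    age = len(model.cumulative) - 1
    same_class = [p for p in peers
                  if p.size_class == model.size_class and p.name != model.name]
    if len(same_class) < top_k:
        raise ValueError("not enough peers in this size class")
    # Rank peers by all-time (final) cumulative downloads; take the top_k-th best.
    ranked = sorted(same_class, key=lambda p: p.cumulative[-1], reverse=True)
    benchmark = downloads_at(ranked[top_k - 1], age)
    if benchmark == 0:
        raise ValueError("benchmark peer has no downloads at this age")
    return downloads_at(model, age) / benchmark


if __name__ == "__main__":
    # Ten synthetic peers growing linearly at 100, 200, ..., 1000 downloads/day for 30 days.
    peers = [DownloadHistory(f"peer{i}", "small", [d * 100 * i for d in range(31)])
             for i in range(1, 11)]
    # A week-old model growing at 250 downloads/day.
    new_model = DownloadHistory("new", "small", [d * 250 for d in range(8)])
    print(relative_adoption(new_model, peers))  # → 2.5
```

In this toy example, the 10th-ranked peer had 700 downloads on day 7 while the new model has 1,750, which gives a score of 2.5. Comparing models at equal age, instead of comparing raw totals, keeps a fresh release from being penalized simply because older models have had more time to accumulate downloads.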

Below is a summary of RAM scores for some of the popular models released late in 2025, with Kimi K2 Thinking and some OCR models standing out as clear winners. DeepSeek V3.2 and DeepSeek’s other recent large models have wildly underperformed the company’s earlier 2025 releases in adoption.
